Trace every agent.

Every decision.

Every failure.

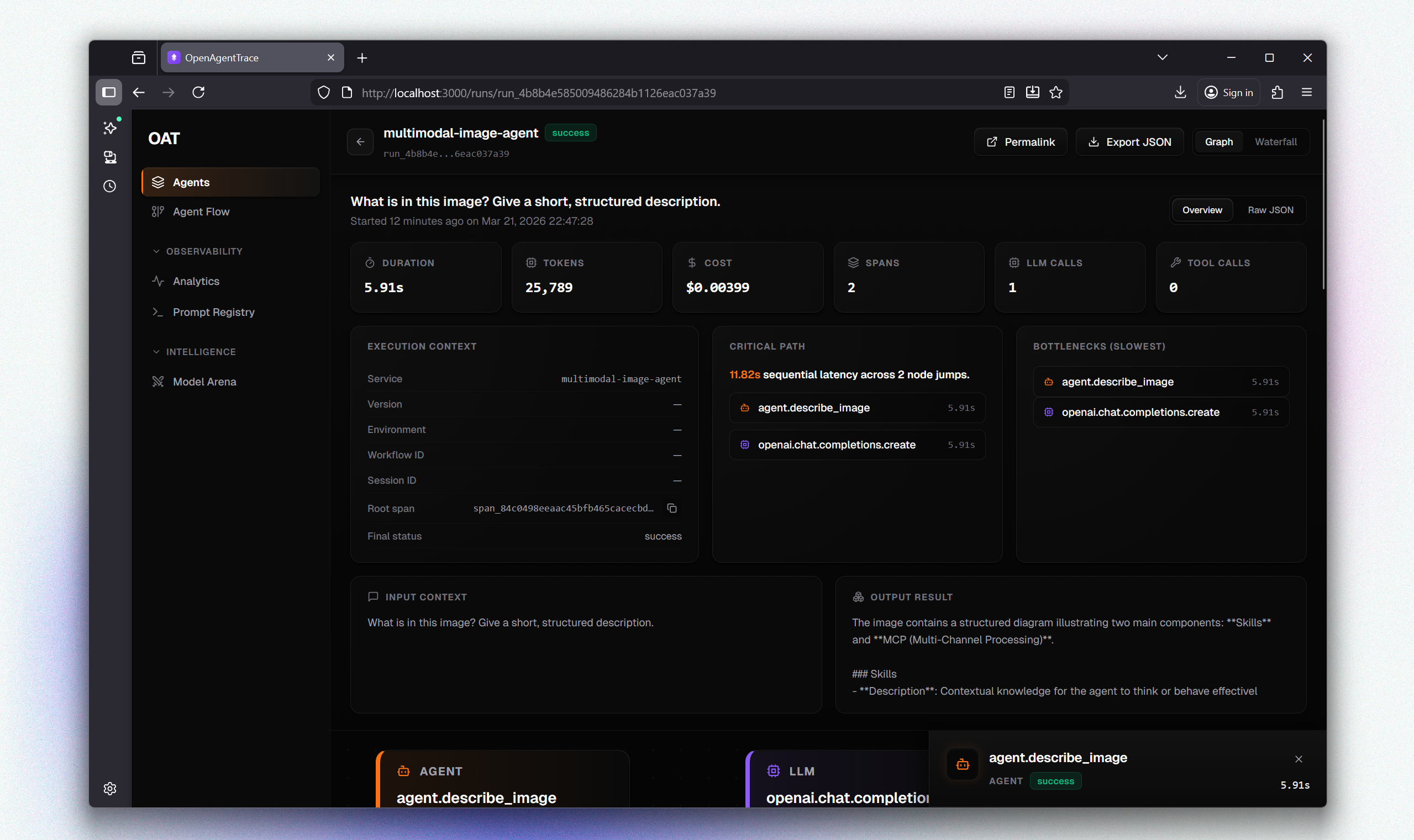

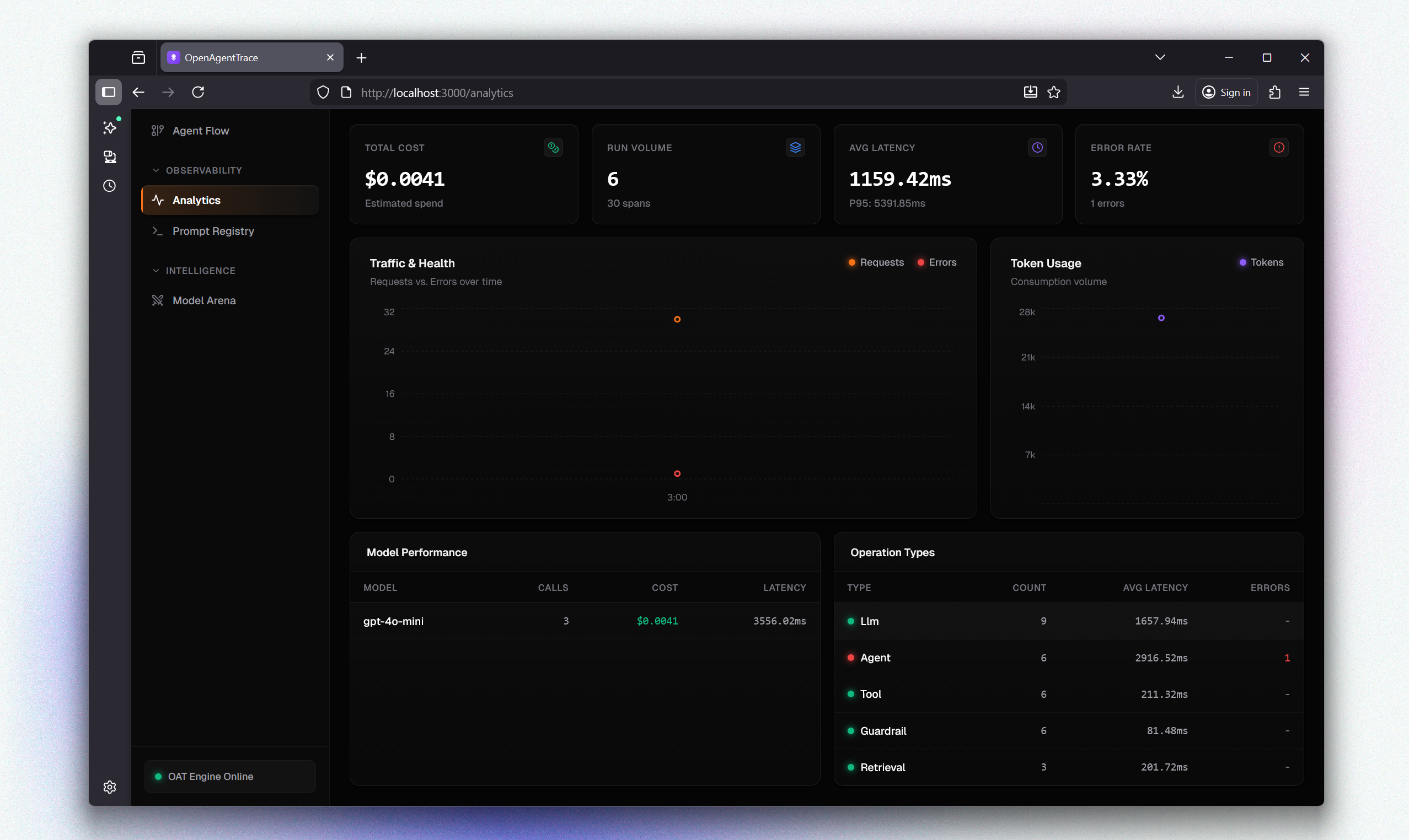

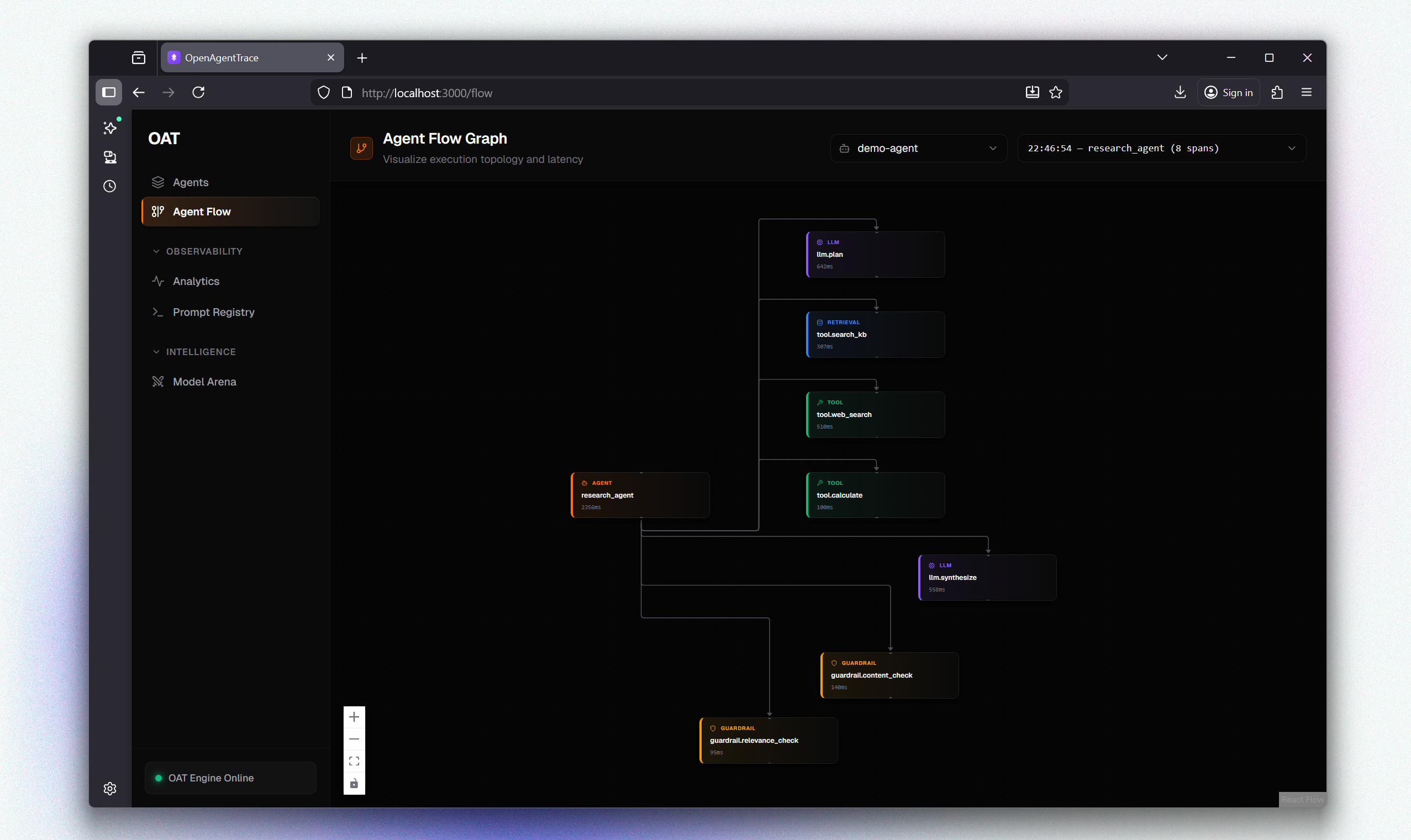

Free and open-source observability for AI agents. Stop debugging black boxes. Get full execution visibility across prompts, tools, retrieval, artifacts, costs, and failures.